Sequential Feature Selection (SFS) is available in theįorward-SFS is a greedy procedure that iteratively finds the best new feature Pixel importances with a parallel forest of trees: example Synthetic data showing the recovery of the actually meaningful shape (150, 2)įeature importances with a forest of trees: example on feature_importances_ array() > model = SelectFromModel ( clf, prefit = True ) > X_new = model. shape (150, 4) > clf = ExtraTreesClassifier ( n_estimators = 50 ) > clf = clf. > from sklearn.ensemble import ExtraTreesClassifier > from sklearn.datasets import load_iris > from sklearn.feature_selection import SelectFromModel > X, y = load_iris ( return_X_y = True ) > X. Impurity-based feature importances, which in turn can be used to discard irrelevantįeatures (when coupled with the SelectFromModel Of trees in the sklearn.ensemble module) can be used to compute

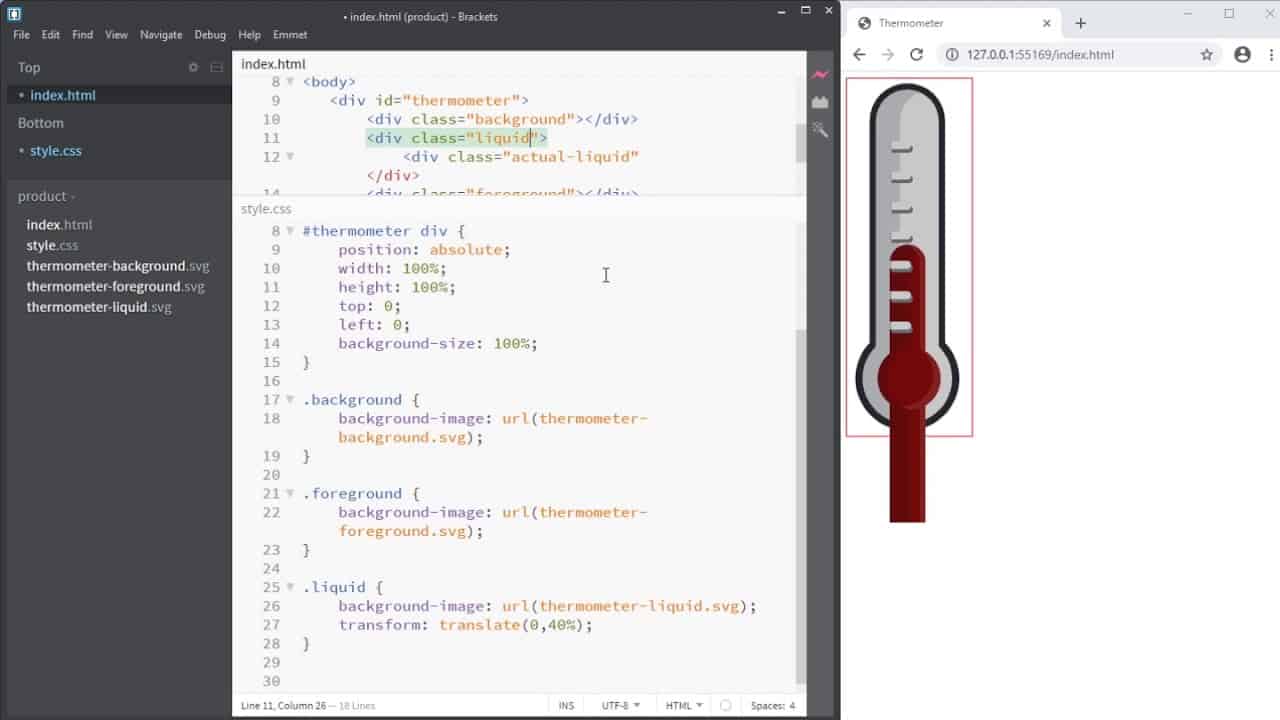

Tree-based estimators (see the ee module and forest Baraniuk “Compressive Sensing”, IEEE Signal ( LassoLarsIC) tends, on the opposite, to set high values of Variables is not detrimental to prediction score. Under-penalized models: including a small number of non-relevant ( LassoCV or LassoLarsCV), though this may lead to There is no general rule to select an alpha parameter for recovery of In addition, the design matrix mustĭisplay certain specific properties, such as not being too correlated. Noise, the smallest absolute value of non-zero coefficients, and the Random, where “sufficiently large” depends on the number of non-zeroĬoefficients, the logarithm of the number of features, the amount of Samples should be “sufficiently large”, or L1 models will perform at With Lasso, the higher theĪlpha parameter, the fewer features selected.įor a good choice of alpha, the Lasso can fully recover theĮxact set of non-zero variables using only few observations, providedĬertain specific conditions are met. The smaller C the fewer features selected. With SVMs and logistic-regression, the parameter C controls the sparsity: fit ( X, y ) > model = SelectFromModel ( lsvc, prefit = True ) > X_new = model. shape (150, 4) > lsvc = LinearSVC ( C = 0.01, penalty = "l1", dual = False ). > from sklearn.svm import LinearSVC > from sklearn.datasets import load_iris > from sklearn.feature_selection import SelectFromModel > X, y = load_iris ( return_X_y = True ) > X. In particular, sparse estimators usefulįor this purpose are the Lasso for regression, and They can be used along with SelectFromModel Is to reduce the dimensionality of the data to use with another classifier, Sparse solutions: many of their estimated coefficients are zero. Linear models penalized with the L1 norm have Model-based and sequential feature selection Max_features parameter to set a limit on the number of features to select.įor examples on how it is to be used refer to the sections below. 
In combination with the threshold criteria, one can use the There are built-in heuristics for finding a threshold using a string argument.Īvailable heuristics are “mean”, “median” and float multiples of these like Apart from specifying the threshold numerically, Importance of the feature values are below the provided The features are considered unimportant and removed if the corresponding SelectFromModel is a meta-transformer that can be used alongside anyĮstimator that assigns importance to each feature through a specific attribute (such asĬoef_, feature_importances_) or via an importance_getter callable after fitting. Feature selection using SelectFromModel ¶ Recursive feature elimination with cross-validation: A recursive featureĮlimination example with automatic tuning of the number of featuresġ.13.4. Showing the relevance of pixels in a digit classification task. Recursive feature elimination: A recursive feature elimination example RFECV performs RFE in a cross-validation loop to find the optimal Repeated on the pruned set until the desired number of features to select is Then, the least importantįeatures are pruned from current set of features. (such as coef_, feature_importances_) or callable.

The importance of each feature is obtained either through any specific attribute First, the estimator is trained on the initial set of features and Is to select features by recursively considering smaller and smaller sets ofįeatures. Given an external estimator that assigns weights to features (e.g., theĬoefficients of a linear model), the goal of recursive feature elimination ( RFE) Beware not to use a regression scoring function with a classificationĬomparison of F-test and mutual information
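The recursive feature elimination procedure described in this section can be tried on a small synthetic problem. The following is a minimal sketch, not a reference recipe: the dataset parameters, the choice of LogisticRegression as the external estimator, and the target of three features are all assumptions made for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFE, RFECV
from sklearn.linear_model import LogisticRegression

# Synthetic data (assumed for illustration): 10 features, 3 of them informative.
X, y = make_classification(
    n_samples=200, n_features=10, n_informative=3, n_redundant=0, random_state=0
)

# The external estimator exposes coef_, which RFE uses as feature importance.
estimator = LogisticRegression(max_iter=1000)

# Prune one feature per iteration until three features remain.
rfe = RFE(estimator, n_features_to_select=3, step=1).fit(X, y)
print("selected mask:", rfe.support_)
print("ranking (1 = kept):", rfe.ranking_)

# RFECV chooses the number of features by cross-validation instead.
rfecv = RFECV(estimator, step=1, cv=5).fit(X, y)
print("number of features chosen by RFECV:", rfecv.n_features_)
```

Features with rank 1 are the ones kept; higher ranks indicate features pruned earlier in the loop.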
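The threshold heuristics and the max_features limit of SelectFromModel can be compared side by side. This sketch assumes the iris data and an ExtraTreesClassifier with a fixed random_state, both chosen only for illustration; setting threshold to -np.inf is one way to let max_features alone decide how many features are kept.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.ensemble import ExtraTreesClassifier
from sklearn.feature_selection import SelectFromModel

X, y = load_iris(return_X_y=True)

# String heuristic: keep features whose importance is at least the median importance.
by_median = SelectFromModel(
    ExtraTreesClassifier(n_estimators=50, random_state=0), threshold="median"
).fit(X, y)
X_median = by_median.transform(X)
print("kept with threshold='median':", X_median.shape[1])

# Disable the threshold and cap the selection at the single most important feature.
top_one = SelectFromModel(
    ExtraTreesClassifier(n_estimators=50, random_state=0),
    threshold=-np.inf,
    max_features=1,
).fit(X, y)
X_top = top_one.transform(X)
print("kept with max_features=1:", X_top.shape[1])
```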
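The statement that a higher Lasso alpha selects fewer features can be checked empirically. The sketch below assumes a synthetic regression problem and three arbitrary alpha values; the largest one is deliberately extreme so that every coefficient is driven to zero.

```python
from sklearn.datasets import make_regression
from sklearn.feature_selection import SelectFromModel
from sklearn.linear_model import Lasso

# Synthetic data (assumed for illustration): 20 features, 5 of them informative.
X, y = make_regression(
    n_samples=100, n_features=20, n_informative=5, noise=1.0, random_state=0
)

alphas = (0.01, 10.0, 1e6)  # arbitrary values, from weak to extreme penalty
counts = []
for alpha in alphas:
    selector = SelectFromModel(Lasso(alpha=alpha, max_iter=10000)).fit(X, y)
    # get_support() is True for features whose coefficient is effectively non-zero.
    counts.append(int(selector.get_support().sum()))

for alpha, n_kept in zip(alphas, counts):
    print(f"alpha={alpha:g}: {n_kept} features kept")
```

The same kind of loop over C with an L1-penalized LinearSVC or LogisticRegression would show the opposite direction, since smaller C means stronger regularization.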
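Forward sequential feature selection can be sketched with the SequentialFeatureSelector transformer. The k-nearest-neighbors estimator, the iris data, and the target of two features are assumptions for illustration only.

```python
from sklearn.datasets import load_iris
from sklearn.feature_selection import SequentialFeatureSelector
from sklearn.neighbors import KNeighborsClassifier

X, y = load_iris(return_X_y=True)
knn = KNeighborsClassifier(n_neighbors=3)

# Greedily add the feature that most improves the cross-validated score,
# stopping once two features have been selected.
sfs = SequentialFeatureSelector(
    knn, n_features_to_select=2, direction="forward", cv=5
).fit(X, y)

print("selected mask:", sfs.get_support())
X_new = sfs.transform(X)
print("reduced shape:", X_new.shape)
```

Unlike RFE and SelectFromModel, this approach does not need the estimator to expose coef_ or feature_importances_, because it relies on cross-validated scores alone.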